I have been writing content professionally for over a decade. And if there is one thing that has made the biggest difference to my results, it is not better writing. It is not a bigger vocabulary. It is not even SEO.

It is A/B testing.

When I first started testing my content, I made a lot of mistakes. I tested the wrong things. I stopped tests too soon. I ignored the data and went with my gut. And I paid the price with weak conversion rates and flat performance.

But once I understood how A/B testing really works in the context of content writing, everything changed. My email open rates improved. My landing page conversions went up. My call-to-action (CTA) clicks increased. And I stopped guessing.

In this article, I want to share everything I know about A/B testing in content writing. What it actually is. Why it matters for conversion rate optimization. What specific elements you should test. And how to run a proper test step by step.

This is written from real experience, not theory.

What Is A/B Testing in Content Writing?

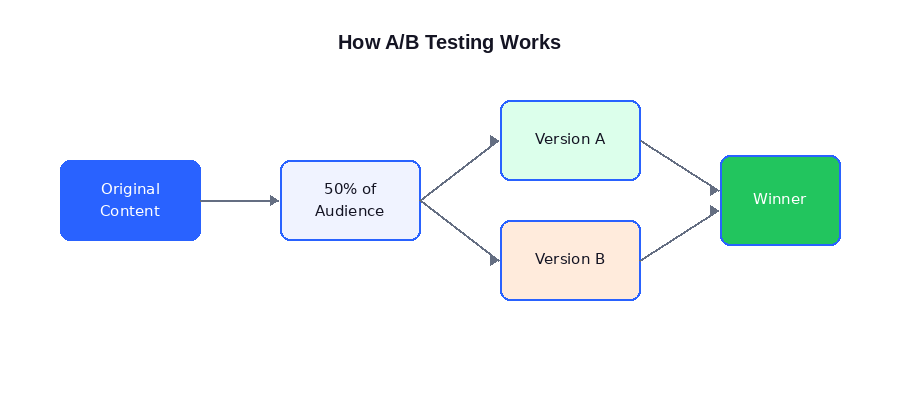

A/B testing, also called split testing, is the process of creating two versions of the same piece of content and showing each version to a different segment of your audience. You then measure which version performs better against a defined goal.

Version A is usually your original or current content. Version B is the variation you want to test. Your audience is split randomly. Half sees A, half sees B. After a set period or once you have enough data, you compare the results.

The goal is to make content decisions based on evidence, not assumptions.

How A/B Testing works: from original content to a clear winning version

In content writing, A/B testing applies to many things. Headlines. Email subject lines. CTAs. Intro paragraphs. Formatting. Tone. Even the length of your content. Each of these elements directly impacts how readers behave. And small changes can have a surprisingly large impact on your conversion rate optimization results.

> Think of A/B testing as asking your audience a silent question: Which version of this content do you prefer? And then letting their behavior give you the answer.

Why A/B Testing Matters for Content Writers

Most content writers create content based on best practices, personal preference, or instinct. And that is a fine starting point. But best practices do not account for your specific audience, your unique product, or the context of your content.

What works for one brand may not work for yours. What works this year may not work next year. Your audience is not a static group. Their preferences shift. Their attention changes. And the only way to keep up is to test.

Here is what A/B testing gives you that intuition never will:

- Real data from real users, not assumptions

- Specific insights about what drives conversions for your particular audience

- The confidence to make content changes with a clear reason behind them

- A feedback loop that makes your content continuously better over time

- A way to tie content decisions directly to business outcomes

I have seen writers spend weeks perfecting a headline, only to have a simpler, plainer version outperform it. I have seen minimal CTAs beat flashy ones. I have seen short intros crush long storytelling openers in email campaigns.

None of that is obvious without testing.

And in the world of conversion rate optimization, A/B testing is one of the most reliable tools available. It removes the ego from content decisions and replaces it with evidence.

What to Test: The 10 Core Content Elements

Not everything is worth testing. You want to focus on elements that have the most direct impact on reader behavior and conversions. Here are the ten core areas I test in my content work.

10 key content elements you can A/B test to improve performance

1. Headlines and Titles

The headline is the first thing anyone sees. It determines whether someone clicks, reads, or scrolls past. It is the single most important element of almost any piece of content.

What to test in headlines:

- Question-based vs. statement-based (e.g., “Are You Making These Mistakes?” vs. “5 Common Mistakes Writers Make”)

- Numbers vs. no numbers (“7 Tips” vs. “Tips for Better Writing”)

- Benefit-focused vs. curiosity-focused

- Short vs. long headlines

I once tested a headline for a landing page that read “Professional Content Writing Services” against “We Help You Write Content That Sells.” The second version increased demo requests by 37%. Same page. Same offer. Different headline.

2. Call-to-Action (CTA) Copy

Your CTA is the point where interest converts into action. The words you choose matter enormously. “Submit” performs very differently from “Get My Free Guide.” Generic vs. specific. Passive vs. active. Transactional vs. value-driven.

What to test in CTAs:

- Button text (“Download Now” vs. “Send Me the PDF”)

- Placement (top of page vs. bottom vs. middle)

- Single CTA vs. multiple CTAs

- First-person phrasing vs. second-person (“Start My Trial” vs. “Start Your Trial”)

3. Introduction Paragraphs

Your intro sets the tone and decides whether a reader continues. A bad intro, even after a great headline, loses readers in seconds.

What to test in intros:

- A story-based opener vs. a stat-based opener

- Starting with the problem vs. starting with the solution

- Long, contextual intro vs. a short, punchy one

4. Email Subject Lines

In email marketing, the subject line is everything. A/B testing subject lines is one of the most common and high-impact tests content writers run. Most email platforms support this natively.

What to test in subject lines:

- Personalization (using the reader’s name) vs. no personalization

- Emoji vs. no emoji

- Short (under 40 characters) vs. long (60+ characters)

- Question vs. statement vs. teaser

5. Content Format and Structure

How you present content matters as much as what you say. Some audiences prefer bullet points and headers. Others engage more with continuous prose. Testing format can reveal a lot about how your readers actually consume content.

What to test in format:

- Long-form post vs. short-form post

- Bullet-heavy structure vs. paragraph-heavy structure

- Content with images vs. text only

- Content with a table of contents vs. without

6. Tone and Voice

Conversational vs. formal. Playful vs. serious. Direct vs. nurturing. Tone shapes how a reader feels about your brand. And that feeling influences behavior.

I tested a formal product page against a conversational rewrite for a SaaS client. The conversational version increased trial sign-ups by 22%. Same product. Same price. Different voice.

7. Social Media Copy

The same article can generate very different engagement depending on how you write the social post promoting it. Testing hook styles, lengths, and formats on platforms like LinkedIn and X (formerly Twitter) gives you a much clearer picture of what your audience responds to.

8. Meta Descriptions

Meta descriptions affect click-through rates from search results. They are not a direct ranking factor, but they strongly influence whether a searcher clicks on your result. Testing different approaches to meta descriptions is low effort and can produce meaningful gains in organic traffic.

9. Content Length

Longer is not always better. Shorter is not always better. It depends on the topic, the audience, and the platform. Testing content length is especially useful for landing pages, product descriptions, and email newsletters.

10. Visual Elements and Image Placement

Where you place an image, what type of image you use, and whether you use images at all can affect how long readers stay on a page. Testing image styles and placement in blog posts and landing pages is an often-overlooked area of content optimization.

How to Run an A/B Test in Content Writing

Running an A/B test is a process. When I first started, I skipped steps and got messy results. Here is the step-by-step framework I use now, and it works consistently.

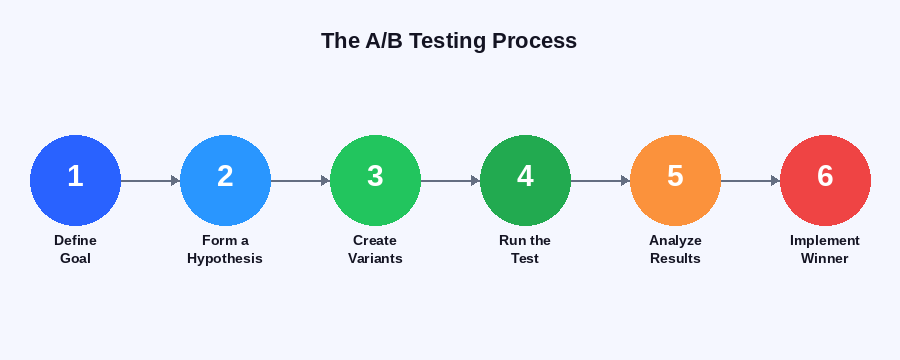

The 6-step A/B testing process for content writers

Step 1: Define Your Goal

Every test needs one clear goal. Not two. Not three. One.

Are you testing to increase click-through rate? Email open rate? Time on page? Sign-ups? Purchases? Be specific before you write a single variation.

Without a defined goal, you will not know what data to look at. And you will end up confused by the results.

Step 2: Form a Hypothesis

A hypothesis is a prediction. It follows this structure: “If I change X, then Y will happen because Z.”

For example: “If I change my CTA from ‘Subscribe’ to ‘Get the Free Guide,’ then my email sign-up rate will increase because the new version communicates clear value to the reader.”

This structure forces you to think about why a change might work. It also helps you learn from the test, even if the result is not what you expected.

Step 3: Create Your Variants

Create Version A (your control, which is the existing content) and Version B (your variation). Test one thing at a time. If you change the headline AND the CTA AND the intro, you will not know which change produced the result.

Testing several elements at once in a way that separates their effects is called multivariate testing. It compares every combination of the changes, so it requires a lot more traffic to be statistically meaningful. For most content writers, stick to one change per test.

Step 4: Set Your Sample Size and Duration

This is where many content writers go wrong. They run a test for three days, see Version B winning, and immediately switch to it. That is a mistake.

Your test needs enough data to be statistically significant. Use a sample size calculator (many are free online) to figure out how many responses you need before the results are reliable. As a rough rule of thumb, aim for at least 100 to 200 conversions per variant, but the real number depends on your baseline conversion rate and how small a difference you want to detect. The more, the better.

Also, run your test for at least two full weeks. This accounts for weekly patterns in user behavior.
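To make the sample size step concrete, here is a minimal sketch of the standard two-proportion approximation that many online calculators are based on. The 5% baseline rate, the hoped-for lift to 6%, the 80% power setting, and the function name are all illustrative assumptions, not figures from a real test.

```python
from math import ceil, sqrt
from statistics import NormalDist


def sample_size_per_variant(baseline_rate, expected_rate, alpha=0.05, power=0.80):
    """Approximate visitors needed per variant for a two-sided two-proportion test."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # about 1.96 for 95% confidence
    z_power = NormalDist().inv_cdf(power)          # about 0.84 for 80% power
    pooled = (baseline_rate + expected_rate) / 2
    numerator = (
        z_alpha * sqrt(2 * pooled * (1 - pooled))
        + z_power * sqrt(
            baseline_rate * (1 - baseline_rate)
            + expected_rate * (1 - expected_rate)
        )
    ) ** 2
    return ceil(numerator / (expected_rate - baseline_rate) ** 2)


# Hypothetical example: 5% baseline sign-up rate, hoping to detect a lift to 6%.
needed = sample_size_per_variant(0.05, 0.06)
print(f"Visitors needed per variant: {needed}")
```

Under these assumed numbers the answer comes out at roughly eight thousand visitors per variant, which at a 5% conversion rate is around 400 conversions each. Smaller expected lifts push the requirement up quickly, which is why low-traffic content often needs longer tests.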

Step 5: Run the Test

Use a proper tool to split your audience randomly. Do not manually send Version A to Monday readers and Version B to Friday readers. That introduces bias.

For email, most platforms (Mailchimp, ConvertKit, ActiveCampaign) have native A/B testing built in. For web pages, tools like VWO or Optimizely handle this. (Google Optimize, long the go-to free option, was shut down in September 2023.) For social media, many scheduling tools now include A/B testing features.
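Testing tools handle the split for you, but it helps to see what a random yet stable assignment can look like. The sketch below hashes a user ID together with a test name, which is one common approach and not a description of how any of the tools named above work internally. The IDs, the test name, and the function name are hypothetical.

```python
import hashlib


def assign_variant(user_id: str, test_name: str) -> str:
    """Deterministically assign a user to 'A' or 'B' with a roughly 50/50 split."""
    key = f"{test_name}:{user_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    # Treat the hash as a large integer: even goes to A, odd goes to B.
    return "A" if int(digest, 16) % 2 == 0 else "B"


# Hypothetical subscriber IDs and test name, for illustration only.
subscribers = [f"user-{n}" for n in range(10_000)]
counts = {"A": 0, "B": 0}
for subscriber in subscribers:
    counts[assign_variant(subscriber, "cta-copy-test")] += 1
print(counts)
```

Because the assignment depends only on the ID and the test name, the same reader always sees the same version, and the day of the week they arrive on has no influence on which group they land in.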

Step 6: Analyze and Implement

Once you have enough data, look at your defined metric. Which version performed better? By how much? Is the difference statistically significant?

If Version B wins, implement it. Document what you tested and what you learned. Build that knowledge into your next test. If Version B loses, that is also valuable. You learned what does not work for your audience.
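If you want to check the numbers yourself rather than rely on a calculator, a pooled two-proportion z-test is one standard way to do it. This is a minimal sketch with made-up visitor and conversion counts; your testing tool may use a different method.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Return (relative lift of B over A, two-sided p-value) using a pooled z-test."""
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_b - rate_a) / std_err
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    lift = (rate_b - rate_a) / rate_a
    return lift, p_value


# Made-up numbers: 200 of 4,000 visitors converted on A, 260 of 4,000 on B.
lift, p_value = two_proportion_z_test(200, 4000, 260, 4000)
print(f"Relative lift: {lift:.1%}, p-value: {p_value:.4f}")
print("Significant at 95%" if p_value < 0.05 else "Not significant at 95%")
```

A p-value below 0.05 corresponds to the 95% confidence threshold. The lift tells you how much better Version B did; the p-value tells you how much to trust that number.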

> The winning version is not the end of the process. It becomes the new control. Then you test again. Good A/B testing content strategy is a continuous loop, not a one-time event.

5 Common A/B Testing Mistakes I Made (So You Do Not Have To)

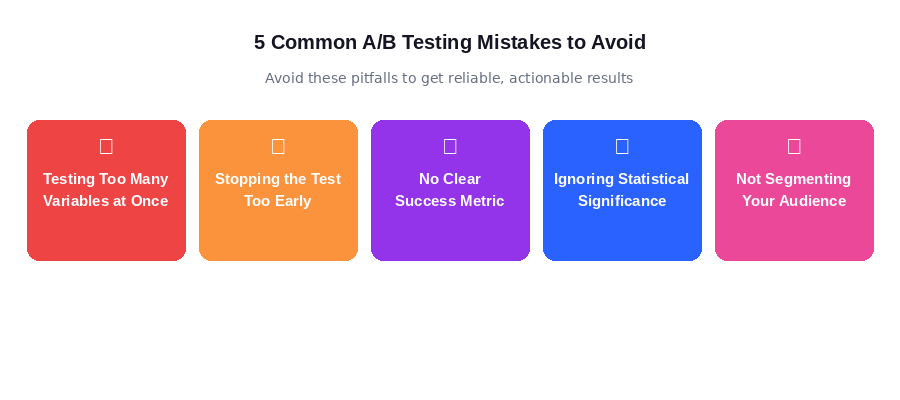

Avoid these 5 pitfalls to get clean, reliable A/B testing results

Mistake 1: Testing Too Many Variables at Once

Early in my career, I changed the headline, intro, CTA, and image in a single test. Version B won. But I had no idea which change made the difference. Now I test one element at a time, always.

Mistake 2: Stopping the Test Too Early

It is tempting to pull the plug when you see a version winning early. But early results are often not representative. Statistical noise can make one version look like a winner in week one and a loser by week three. Wait until you hit your sample size target.
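One way to see why early stopping misleads is to simulate a test in which both versions are identical, so any "winner" is pure noise. The sketch below compares checking the results every 200 visitors against checking once at the end. The conversion rate, visitor counts, number of runs, and seed are arbitrary assumptions.

```python
import random
from math import sqrt
from statistics import NormalDist


def is_significant(conv_a, conv_b, n, alpha=0.05):
    """Pooled two-proportion z-test for two equally sized groups of n visitors."""
    pooled = (conv_a + conv_b) / (2 * n)
    if pooled in (0, 1):
        return False  # no variation yet, nothing to test
    z = (conv_b / n - conv_a / n) / sqrt(pooled * (1 - pooled) * 2 / n)
    return 2 * (1 - NormalDist().cdf(abs(z))) < alpha


def simulate(runs=500, visitors=2000, check_every=200, rate=0.05, seed=42):
    """A/A simulation: both versions convert at the same true rate."""
    rng = random.Random(seed)
    peeking_hits = final_hits = 0
    for _ in range(runs):
        conv_a = conv_b = 0
        peeked_winner = False
        for n in range(1, visitors + 1):
            conv_a += rng.random() < rate
            conv_b += rng.random() < rate
            # "Peek" at the running totals at regular intervals.
            if n % check_every == 0 and is_significant(conv_a, conv_b, n):
                peeked_winner = True
        peeking_hits += peeked_winner
        final_hits += is_significant(conv_a, conv_b, visitors)
    return peeking_hits / runs, final_hits / runs


peeking_rate, final_rate = simulate()
print(f"False winners when peeking every 200 visitors: {peeking_rate:.1%}")
print(f"False winners when checking once at the end:   {final_rate:.1%}")
```

Since there is no real difference between the versions, every "significant" result here is a false alarm. Peeking repeatedly should flag noticeably more of them than a single check at the planned sample size, which is the statistical reason to wait.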

Mistake 3: Not Having a Clear Metric

I once ran a test to “improve performance” without defining what that meant. I got a pile of data and no clear conclusion. Every test needs one primary metric. Define it before you start.

Mistake 4: Ignoring Statistical Significance

A 3% difference in conversion rate sounds meaningful. But if your test only had 50 respondents, that difference might be pure chance. Always check for statistical significance. A 95% confidence level is the standard minimum.
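To put a number on how noisy small samples are, here is a rough sketch of the margin of error around a measured conversion rate at different sample sizes. It uses the normal approximation, which is crude for very small samples, and the 10% conversion rate is an assumed figure.

```python
from math import sqrt
from statistics import NormalDist


def margin_of_error(rate, sample_size, confidence=0.95):
    """Half-width of the normal-approximation confidence interval for a rate."""
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    return z * sqrt(rate * (1 - rate) / sample_size)


# Assumed 10% conversion rate, measured on different sample sizes.
for n in (50, 500, 5000):
    moe = margin_of_error(0.10, n)
    print(f"n={n:>5}: 10% ± {moe * 100:.1f} percentage points")
```

With 50 respondents the uncertainty is around eight percentage points in either direction, so a gap of a few points between two versions sits well inside the noise. At 5,000 respondents the same gap would be worth taking seriously.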

Mistake 5: Not Documenting Your Tests

I used to run tests and forget what I had learned. Now I keep a simple testing log. I record what I tested, my hypothesis, the result, and what I concluded. Over time, this log becomes one of the most valuable assets in my content strategy.
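A spreadsheet works fine for this, but if you prefer something scriptable, a testing log can be as simple as a CSV file. The sketch below is one possible layout; the field names, file name, and example entry are placeholders, not a prescribed format.

```python
import csv
import tempfile
from dataclasses import asdict, dataclass, fields
from pathlib import Path


@dataclass
class ExperimentRecord:
    date: str
    element: str
    hypothesis: str
    metric: str
    result: str
    conclusion: str


def append_to_log(record: ExperimentRecord, path: Path) -> None:
    """Append one test to a CSV log, writing the header row if the file is new."""
    is_new = not path.exists()
    column_names = [f.name for f in fields(ExperimentRecord)]
    with path.open("a", newline="", encoding="utf-8") as handle:
        writer = csv.DictWriter(handle, fieldnames=column_names)
        if is_new:
            writer.writeheader()
        writer.writerow(asdict(record))


# Placeholder entry, written to the system temp directory for this example.
log_path = Path(tempfile.gettempdir()) / "ab_test_log.csv"
append_to_log(
    ExperimentRecord(
        date="2024-01-15",
        element="CTA copy",
        hypothesis="'Get the Free Guide' beats 'Subscribe' because it states clear value",
        metric="email sign-up rate",
        result="(fill in after the test)",
        conclusion="(fill in after the test)",
    ),
    log_path,
)
print(f"Logged to {log_path}")
```

Whatever format you choose, the point is that each row captures the same four things every time: what changed, why you expected it to matter, what happened, and what you took from it.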

A/B Testing Tools for Content Writers

You do not need expensive software to run good A/B tests. Here are the tools I have used and recommend:

For Email:

- Mailchimp: Built-in A/B testing for subject lines, send times, and content

- ConvertKit: Clean subject line testing with clear result reporting

- ActiveCampaign: More advanced testing options including full email content

For Web Pages and Landing Pages:

- Google Optimize was shut down in 2023; alternatives such as AB Tasty fill the same role

- VWO (Visual Website Optimizer): Full-featured and user-friendly

- Optimizely: Enterprise-level but powerful

- Unbounce: Great for landing page A/B testing built into the page builder

For Social Media:

- LinkedIn Campaign Manager supports A/B testing for ad campaigns

- Meta Ads Manager has robust split testing built in

- Buffer Analyze gives insight into post performance comparisons

For most content writers starting out, the built-in testing tools in your email platform and a free landing page tool will cover most of your needs.

How A/B Testing Connects to Conversion Rate Optimization

Conversion rate optimization (CRO) is the broader practice of improving the percentage of visitors or readers who take a desired action. A/B testing is one of the most powerful tools within CRO.

When I write content now, I think in terms of the conversion funnel. Every piece of content exists to move a reader from one stage to the next. Awareness to interest. Interest to consideration. Consideration to action.

A/B testing helps me identify exactly where readers are dropping off and what change in the content fixes it.

Here is how A/B testing content fits into a CRO workflow:

- Identify a low-performing content asset (high bounce rate, low CTA clicks, poor email open rates)

- Form a hypothesis about what change could improve performance

- Run an A/B test

- Implement the winner

- Identify the next lowest-performing element

- Repeat

This creates a continuous improvement loop. Each test, whether it wins or loses, teaches you something about your audience. Over time, your content becomes sharper, more effective, and more aligned with what your readers actually respond to.

The compounding effect of this process is significant. A 5% improvement in email open rates leads to more readers. A 10% improvement in landing page conversions leads to more leads. A 15% improvement in CTA click rates leads to more sales. None of these seem dramatic in isolation. But together, they transform your content performance.
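The arithmetic behind that compounding is worth seeing once. This sketch assumes the three improvements are relative lifts and that they apply to successive stages of the same funnel (open the email, click the CTA, convert on the landing page), so they multiply rather than add. That funnel is a simplifying assumption.

```python
# Relative improvements from the paragraph above, applied to successive funnel stages.
lifts = {
    "email open rate": 0.05,
    "CTA click rate": 0.15,
    "landing page conversion rate": 0.10,
}

combined = 1.0
for stage, lift in lifts.items():
    combined *= 1 + lift
    print(f"After improving {stage} by {lift:.0%}: {combined:.3f}x baseline")

print(f"Combined improvement: {combined - 1:.1%}")
```

Under those assumptions, three modest wins add up to roughly a third more conversions from the same audience.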

Quick A/B Testing Checklist for Content Writers

Before you launch your next test, run through this checklist:

- Have I defined exactly one goal for this test?

- Have I written a clear hypothesis?

- Am I testing only one element (not multiple changes at once)?

- Have I calculated the required sample size?

- Is my audience being split randomly?

- Am I using a reliable testing tool?

- Have I set a minimum test duration of at least two weeks?

- Is my primary metric defined and trackable?

- Do I have a place to document my test and results?

If you can check every box, you are set up for a test that will give you clean, reliable data you can actually act on.

Final Thoughts

A/B testing changed how I think about content writing entirely. It shifted my mindset from “I think this will work” to “Let me find out what actually works.”

That shift is powerful. It removes guesswork. It removes ego. It gives you a direct line of communication between your content decisions and your audience’s actual behavior.

The best content writers I know are not just good at crafting words. They are good at understanding data. They use A/B testing content strategies to continuously improve their work. They treat every piece of content as an experiment, not a finished product.

You do not need to be a data scientist to do this. You just need to be curious, patient, and willing to let the evidence guide you.

Start small. Pick one element. Form a hypothesis. Run the test. See what happens. Then do it again.

That is how great content gets made.

Frequently Asked Questions

1. How long should I run an A/B test for content writing?

At a minimum, run your test for two full weeks. This accounts for variations in daily and weekly user behavior. More important than time, though, is sample size. You need enough data points (as a rough rule of thumb, 100 to 200 conversions per variant) to reach statistical significance. If your content gets very low traffic, you may need to run a test for longer to gather enough responses.

2. Can I run A/B tests on blog posts and long-form content?

Yes, but it requires more planning. For blog posts, you can test elements like the headline (using tools like Google Search Console to track CTR), the CTA placement, the intro paragraph, or even the content format. Some content management platforms allow you to serve different versions to different visitors. Alternatively, you can test a series of posts with one variable changed across the set.

3. How do I know if my A/B test result is statistically significant?

You can use free online statistical significance calculators (many SEO and CRO tools offer these). Enter the sample size and the number of conversions for each variant. Aim for at least 95% confidence before calling a winner. Below that threshold, the chance that the difference is just noise is too high to act on. Never call a winner based on a small sample or a short test window.

4. What is the difference between A/B testing and multivariate testing?

A/B testing compares two versions of a single element, like two different headlines or two different CTAs. Multivariate testing compares multiple elements changed simultaneously, which means you are testing many combinations at once. Multivariate testing requires significantly more traffic to reach significance and is more complex to analyze. For most content writers, A/B testing is the right starting point and handles the majority of practical use cases.

5. What should I do if neither version wins in my A/B test?

A null result is still a result. If there is no statistically significant difference between Version A and Version B, either that change does not meaningfully affect your defined metric for your audience, or its effect is too small for your sample to detect. Take note of it, document it in your testing log, and move on to testing a different element. Not every test produces a clear winner, and that is fine. It helps you narrow down where the real opportunities lie in your content.